What do you do when an error or exception occurs in a process that handles an order or transaction initiated by an upstream system and spanning multiple systems or applications? Suppose processing fails at an intermediate point, after interactions with several systems or applications have already completed. For example, if your TIBCO solution fails at a step that requires a database interaction because the database appears non-responsive, will you simply terminate the process and expect the transaction to be re-initiated from the source (upstream) system? Ideally, that shouldn’t be the case. A good middleware implementation should be able to deal with such scenarios and retry a certain number of times before declaring a failure.

In this post, I will discuss the approaches you can consider when implementing a retry mechanism in your TIBCO BW projects.

Multiple Delayed Retry Attempts in the Same Process Instance

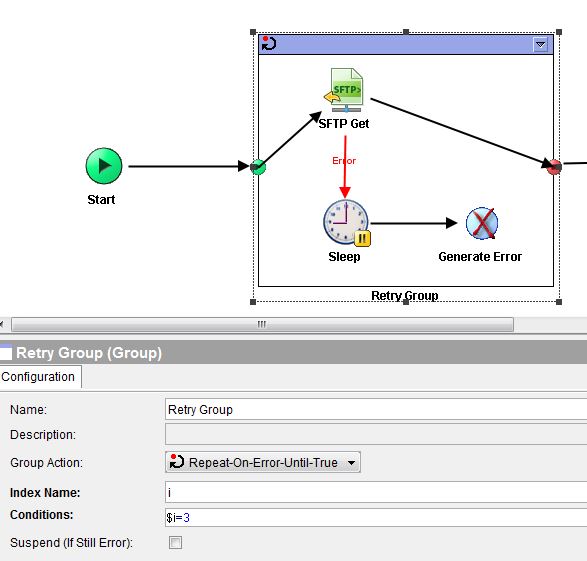

For certain types of errors, such as timeout errors, this is the most suitable approach. By enclosing the desired activities (e.g. JDBC activities, FTP activities) in a “Repeat On Error Until True” group, you can retry up to x times if an activity inside the group fails.

In this type of retry implementation, it is recommended to add a Sleep activity with a suitable wait time so that retries happen periodically rather than back to back.

As an example, the screenshot above shows an SFTP Get activity enclosed in a “Repeat On Error Until True” group that will try 3 times. A Sleep activity adds a delay before the next retry.
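The behaviour of such a group can be sketched in ordinary code. The snippet below is only an illustration in Python, not TIBCO BW configuration: the function `repeat_on_error` and its parameters `max_attempts` and `delay_seconds` are hypothetical names standing in for the group's iteration limit and the Sleep activity's interval.

```python
import time


def repeat_on_error(action, max_attempts=3, delay_seconds=5.0, sleep=time.sleep):
    """Call action(); if it raises, wait and try again, up to max_attempts in total.

    Hypothetical stand-in for a retry group containing one activity and a Sleep.
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return action()  # success ends the loop immediately
        except Exception as error:
            last_error = error
            if attempt < max_attempts:
                sleep(delay_seconds)  # pause before the next attempt
    # All attempts failed: surface the last error to the caller.
    raise last_error


if __name__ == "__main__":
    attempts = []

    def flaky_sftp_get():
        # Simulated activity: times out twice, then succeeds.
        attempts.append(1)
        if len(attempts) < 3:
            raise TimeoutError("simulated timeout")
        return "file contents"

    result = repeat_on_error(flaky_sftp_get, max_attempts=3, delay_seconds=0)
    print(result, "after", len(attempts), "attempts")
```

The total added latency in the worst case is roughly `(max_attempts - 1) * delay_seconds`, which is worth keeping in mind when choosing the wait time.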

Important Points regarding this retry approach:

Bear in mind that “Repeat On Error Until True” groups should be used with care, because they cause unnecessary processing delays when the error is not self-correcting. For example, if the error is due to misconfigured user credentials or a data issue, it can’t be fixed automatically, and any number of retries will be futile. So the activities involved, and the specific types of errors for which a retry can actually work, should be identified carefully.

It is also important to consider the transaction flow, the time and data criticality of the transaction, and the communication mode before applying such a retry mechanism in a process. For example, a synchronous communication model requires a prompt end-to-end response, so long retry delays in the intermediate middleware layer may not be acceptable there.

Having said that, the benefits of this type of in-process, delay-based retry implementation shouldn’t be underestimated. Transparently absorbing network glitches (e.g. timeouts) in this way not only avoids unnecessary issue escalations but also reduces the overhead on upstream and downstream systems.
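One way to picture "retry only for errors that can heal themselves" is a small classification step before each retry. The sketch below is a Python illustration under assumptions, not BW configuration: the error types and message fragments listed as retryable, and the names `is_retryable` and `retry_transient`, are hypothetical and would need to be replaced by the errors your own activities actually raise.

```python
import time

# Assumed examples of transient failures; adjust to your own environment.
RETRYABLE_TYPES = (TimeoutError, ConnectionError)
RETRYABLE_MESSAGE_FRAGMENTS = ("timed out", "connection reset")


def is_retryable(error):
    """Return True only for errors that may disappear on a later attempt."""
    if isinstance(error, RETRYABLE_TYPES):
        return True
    message = str(error).lower()
    return any(fragment in message for fragment in RETRYABLE_MESSAGE_FRAGMENTS)


def retry_transient(action, max_attempts=3, delay_seconds=5.0, sleep=time.sleep):
    """Retry action() up to max_attempts times, but only for retryable errors."""
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except Exception as error:
            # Credential or data errors fail fast; so does the final attempt.
            if not is_retryable(error) or attempt == max_attempts:
                raise
            sleep(delay_seconds)
```

With this shape, a timeout is retried up to three times, while something like a bad-credentials error is raised on the first attempt without any wait.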

Retry in a Separate Process Engine

Imagine a scenario where your TIBCO solution integrates multiple systems that communicate asynchronously over EMS. If a process in the overall flow hits a non-data issue (e.g. a network issue) and immediate retries with the “Repeat On Error Until True” approach don’t get the transaction completed, you can push the data to an EMS destination, with all the payload needed to resume based on the processing completed up to that point.

A generic retry process can then be started at defined time intervals (with a Timer as the process starter). It uses a “Get JMS Queue Message” activity to retrieve the queued message and resumes the processing.

The benefit of this approach is that the retry implementation runs in a separate engine, so it doesn’t consume the same memory resources as the main flow, and it can be given its own configuration for memory, thread count, etc.

The drawback of this approach is that the timer-based process runs periodically even when there are no messages in the retry queue. (However, this can be tweaked so that the process ends cleanly when the “Get JMS Queue Message” activity times out.)
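The park-and-resume flow can be sketched as follows. This is a Python illustration only: an in-memory `queue.Queue` stands in for the EMS retry destination, and `park_for_retry`, `run_retry_cycle`, `resume` and the `completed_steps` field are hypothetical names, not BW or EMS APIs. Each call to `run_retry_cycle` represents one timer-triggered run, which ends as soon as a receive times out.

```python
import queue


def park_for_retry(retry_queue, payload, completed_steps):
    """Store the payload plus the progress made so far, so work can resume later."""
    retry_queue.put({"payload": payload, "completed_steps": list(completed_steps)})


def run_retry_cycle(retry_queue, resume, receive_timeout=1.0):
    """One timer-triggered run: drain the queue, then end when a receive times out.

    Returns the number of messages that were resumed successfully.
    """
    processed = 0
    still_failing = []
    while True:
        try:
            message = retry_queue.get(timeout=receive_timeout)
        except queue.Empty:
            break  # nothing left: end this run cleanly
        try:
            resume(message)
            processed += 1
        except Exception:
            # Keep the message for a later run instead of losing it.
            still_failing.append(message)
    # Re-park failures only after draining, so one run never loops on them.
    for message in still_failing:
        retry_queue.put(message)
    return processed
```

Re-parking failed messages only after the queue has been drained is a deliberate choice in this sketch: it keeps a single run from spinning on a message that is still failing. A real implementation would probably also track a retry count per message and move it to an error destination after a limit.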


Great explanation and this is something which is widely used in organizations

Thank you, sir, for this explanation. Very helpful post (y)

How can I add a retry mechanism based on a particular error message?

I mean, I want to retry 3 times only for that particular error. Please help me with this.